Jin's Daily AI Trivia: Apple AI Releases Diffusion-Based Coder Model

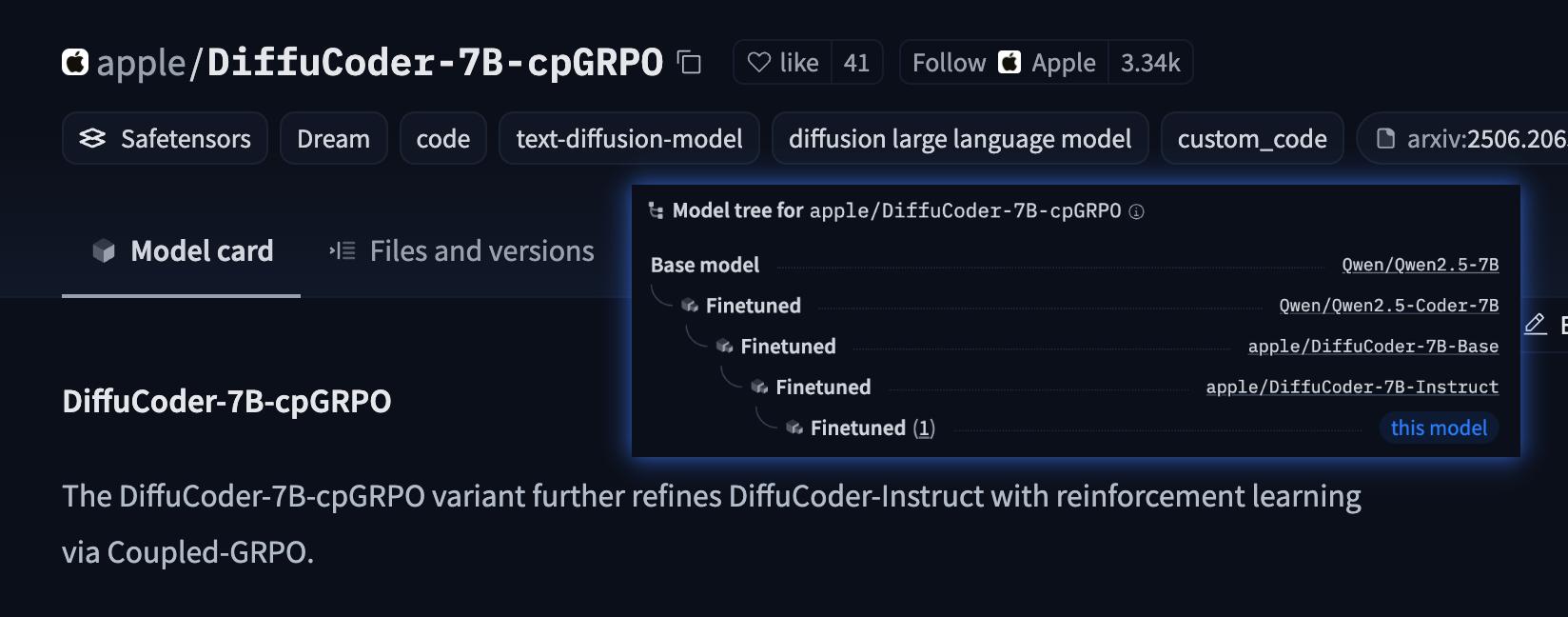

Summary: Don’t bother using it. It’s not great and is mainly intended for research. (It can’t even outperform Qwen2.5-Coder-7B, the model it was fine-tuned from.)

This is one of the few open-weight models built on diffusion LLM techniques, and unusually, it is applied to coding, whereas diffusion models have typically shone in high-speed generation tasks like speech.

Check out Apple’s research paper for some interesting insights:

- Diffusion-based LLMs (dLLMs) still show a left-to-right bias in the order they generate tokens, inherited from the left-to-right structure of natural language.

- dLLMs perform poorly on coding tasks compared to math-related tasks.

- Temperature affects dLLMs much more than autoregressive LLMs: it changes not only which tokens are sampled but also the order in which they are generated.
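To make the decoding-order and temperature points above a bit more concrete, here is a toy sketch in Python. It is not the paper's method or Apple's sampler. It assumes a hypothetical model (`toy_confidences`) whose confidence is highest at the leftmost masked position, unmasks one position per step, and, as a simplification, applies temperature directly to the choice of position (in real dLLMs temperature is typically applied to token sampling, which then shifts which positions look confident). `ar_ness` is a made-up, simplified score: the fraction of steps that filled the leftmost still-masked slot.

```python
import math
import random


def toy_confidences(masked, rng):
    """Hypothetical stand-in for a dLLM's per-position confidence.

    Assumption: confidence peaks at the leftmost masked position and drops by
    1.0 per step to the right, mimicking a left-to-right bias. The added noise
    is < 0.5, so the leftmost position always stays the most confident.
    """
    leftmost = min(masked)
    return {pos: -(pos - leftmost) + 0.5 * rng.random() for pos in masked}


def pick_position(conf, temperature, rng):
    """Choose which masked position to fill next.

    temperature <= 0 means greedy (most confident position); otherwise sample
    from softmax(conf / temperature). Simplification: temperature acts on the
    position choice here, not on token sampling.
    """
    positions = list(conf)
    if temperature <= 0:
        return max(positions, key=conf.get)
    top = max(conf.values())  # subtract the max for numerical stability
    weights = [math.exp((conf[p] - top) / temperature) for p in positions]
    return rng.choices(positions, weights=weights, k=1)[0]


def decode_order(length, temperature, seed=0):
    """Unmask one position per step; return the order positions were filled."""
    rng = random.Random(seed)
    masked = set(range(length))
    order = []
    while masked:
        pos = pick_position(toy_confidences(masked, rng), temperature, rng)
        order.append(pos)
        masked.remove(pos)
    return order


def ar_ness(order):
    """Fraction of steps that filled the leftmost still-masked position."""
    masked = set(order)
    hits = 0
    for pos in order:
        if pos == min(masked):
            hits += 1
        masked.remove(pos)
    return hits / len(order)


print(decode_order(8, temperature=0))  # [0, 1, 2, 3, 4, 5, 6, 7]
```

At temperature 0 the toy decodes strictly left to right; raising the temperature (e.g. `decode_order(32, temperature=5.0)`) pushes `ar_ness` below 1.0, so the generation order itself becomes random, not just the tokens.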

Other dLLMs worth mentioning: LLaDA-8B, Gemini Diffusion, Dream 7B, and Mercury, which is billed as commercial-scale.

Why dLLMs? They can generate output faster than autoregressive models (dLLMs are usually transformer-based too), because they refine many tokens in parallel at each step instead of producing one token at a time.
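A rough way to see the parallelism argument is to count forward passes. The toy below uses a hypothetical `toy_model` that proposes a token and a confidence for every masked slot in one call; autoregressive decoding commits one token per call, while diffusion-style decoding commits the `tokens_per_step` most confident slots per call. It only counts model calls and is a sketch under those assumptions, not how any of the models named above are implemented.

```python
import random


def toy_model(tokens, rng):
    """Hypothetical model: one forward pass proposes (token, confidence) for
    every masked slot (None). Values are fake; only the call count matters."""
    return {i: (f"tok{i}", rng.random()) for i, t in enumerate(tokens) if t is None}


def autoregressive_decode(length, rng):
    """Commit exactly one token per forward pass, strictly left to right."""
    tokens = [None] * length
    passes = 0
    for i in range(length):
        proposals = toy_model(tokens, rng)
        passes += 1
        tokens[i] = proposals[i][0]  # keep only the next-token prediction
    return tokens, passes


def diffusion_decode(length, tokens_per_step, rng):
    """Commit the `tokens_per_step` most confident masked slots per pass."""
    if tokens_per_step < 1:
        raise ValueError("tokens_per_step must be >= 1")
    tokens = [None] * length
    passes = 0
    while any(t is None for t in tokens):
        proposals = toy_model(tokens, rng)
        passes += 1
        best = sorted(proposals, key=lambda i: proposals[i][1], reverse=True)
        for i in best[:tokens_per_step]:
            tokens[i] = proposals[i][0]
    return tokens, passes


rng = random.Random(0)
_, ar_passes = autoregressive_decode(64, rng)
_, dlm_passes = diffusion_decode(64, tokens_per_step=4, rng=rng)
print(ar_passes, dlm_passes)  # 64 16
```

Fewer passes do not automatically mean lower latency: each diffusion pass processes the whole sequence and can't straightforwardly reuse a standard KV cache the way autoregressive decoding does, so real speedups depend on the model and on how many tokens are committed per step.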

Hope you learned something new today!!! See ya!!