Jin Daily AI Trivia – OpenAI’s Real Open GPT-OSS is Finally Here!

Here’s what you need to know:

Apache 2.0 License – You can fine-tune, modify, or use it however you want, commercial use included. It’s one of the most permissive licenses used for AI models.

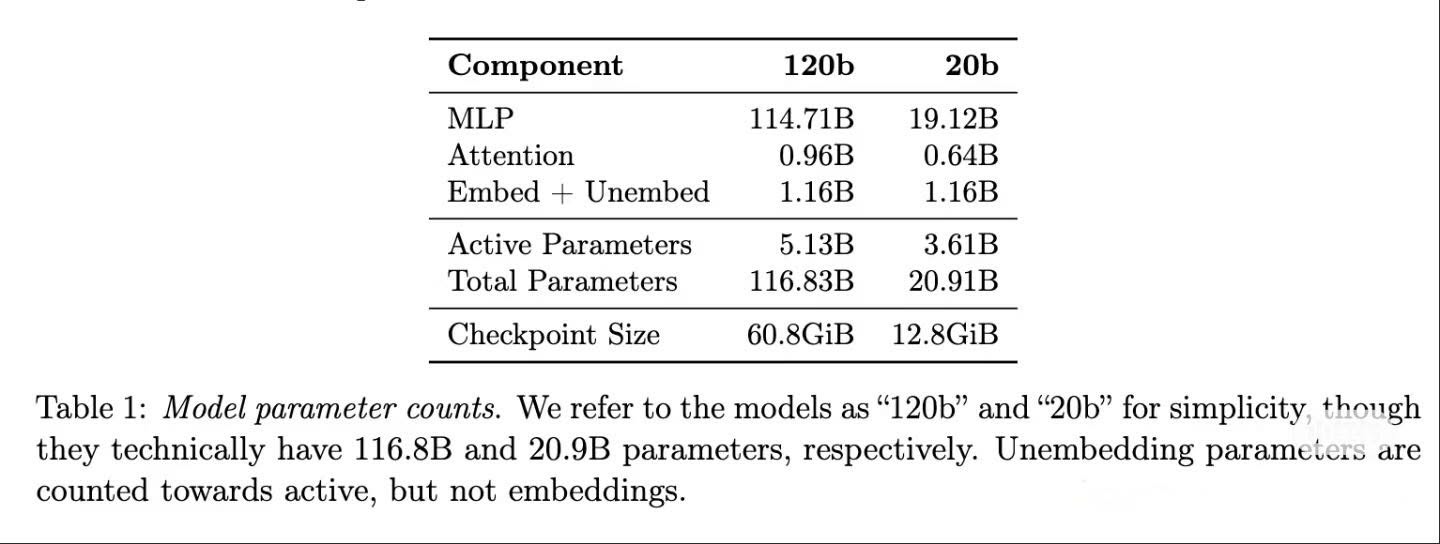

Crazy MoE Design – The large model is 120B-A5B: out of roughly 120B total parameters, it only activates about 5B per token! It also comes with chain-of-thought (CoT) output and reasoning-mode switches.
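To make the "only some parameters are active" idea concrete, here is a toy sketch of top-k expert routing, the general mechanism behind MoE layers. It is not OpenAI's implementation: the 8 scalar "experts", the `k=2` setting, and the helper names `top_k_route` and `moe_forward` are all made up for illustration.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    # Rank experts by router score and keep only the best k.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_forward(x, experts, router_logits, k):
    """Run only the k selected experts and mix their outputs for one token."""
    out = 0.0
    for idx, weight in top_k_route(router_logits, k):
        out += weight * experts[idx](x)  # experts that were not selected never run
    return out

# Toy experts: expert number s just multiplies its input by s (real experts are MLP blocks).
experts = [lambda x, s=s: s * x for s in range(1, 9)]
router_logits = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 10.0, 10.0]

chosen = top_k_route(router_logits, k=2)
print(chosen)
print(moe_forward(1.0, experts, router_logits, k=2))
```

Only the experts returned by `top_k_route` do any work for a given token, which is why the active parameter count can be so much smaller than the total.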

Minimal Hardware Required –
• gpt-oss-120b runs on a single 80GB GPU (like an H100).
• gpt-oss-20b runs on a single 16GB consumer GPU (like an RTX 5080).
• Runs surprisingly well on a 24GB Mac too!
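A quick back-of-the-envelope check shows why these numbers are plausible. This sketch assumes the weights are stored at roughly 4.25 bits per parameter (a 4-bit format plus shared scaling factors, in the style of MXFP4) and counts weights only. The bit width, the round parameter counts, and the helper name `weight_memory_gb` are assumptions for illustration, and the estimate ignores the KV cache, activations, and any layers kept at higher precision.

```python
def weight_memory_gb(params_billion, bits_per_param):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bits = params_billion * 1e9 * bits_per_param
    return total_bits / 8 / 1e9

# Nominal sizes from the model names; the bit widths are assumptions.
for name, params in [("gpt-oss-120b", 120), ("gpt-oss-20b", 20)]:
    at_16_bit = weight_memory_gb(params, 16)
    at_4_bit = weight_memory_gb(params, 4.25)
    print(f"{name}: ~{at_16_bit:.0f} GB at 16-bit, ~{at_4_bit:.1f} GB at ~4.25-bit")
```

Under these assumptions the large model needs roughly 64 GB for weights and the small one roughly 11 GB, which fits the 80GB and 16GB figures with some headroom. At 16-bit precision neither would fit.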

Model Comparison –
• 120B is said to be comparable to o4-mini.
• 20B is roughly on par with o3-mini.

Built for Tools (Agent-Ready) – It supports three reasoning/tool-use levels:
• Low: Minimal tool use
• Medium: 1–2 tools + verification
• High: Plan → Execute → Verify + rollback & tool use
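Here is a minimal sketch of how one might pick a level in practice. It assumes the level is selected with a line like `Reasoning: high` in the system prompt, which is how the gpt-oss model card describes it, and it uses the common chat-style message list. The helper `build_messages` is hypothetical, and the sketch does not call any model.

```python
VALID_LEVELS = ("low", "medium", "high")

def build_messages(level, user_prompt):
    """Build a chat-style message list that requests a given reasoning level."""
    if level not in VALID_LEVELS:
        raise ValueError(f"level must be one of {VALID_LEVELS}, got {level!r}")
    return [
        # Assumption: the model reads the reasoning level from the system prompt.
        {"role": "system", "content": f"Reasoning: {level}"},
        {"role": "user", "content": user_prompt},
    ]

messages = build_messages("high", "Plan a three-step data cleanup and verify each step.")
for message in messages:
    print(message["role"], "->", message["content"])
```

The resulting list can be passed to whatever chat-completions-style client or local runtime you use to serve the model.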

⸻

The Not-So-Good:

Context Window – Only 128K tokens. It was extended from a native 4K context using YaRN.
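For a sense of how big that stretch is, the arithmetic below computes the scaling factor implied by going from 4K to 128K. It assumes "4K" and "128K" mean 4,096 and 131,072 tokens, and it only computes the ratio, not the YaRN method itself.

```python
# Assumption: "4K" = 4 * 1024 tokens and "128K" = 128 * 1024 tokens.
original_context = 4 * 1024
extended_context = 128 * 1024

scale = extended_context / original_context
print(f"Context extended {scale:.0f}x: {original_context} -> {extended_context} tokens")
```

So the usable context is 32 times longer than the length the position encoding started from.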

Expert Count – 120B has 128 experts, but the 20B version only has 32.

Hallucination Rate – Pretty high. The 20B version scores 0.914 (higher is worse), significantly worse than o4-mini.

No Mobile-Sized Model Yet – Still waiting for a version optimized for phones. Sam says one is coming.

⸻

Running some tests now, will share my findings soon!

Hope you learned something new today!! See ya!