Jin Daily Trivia:

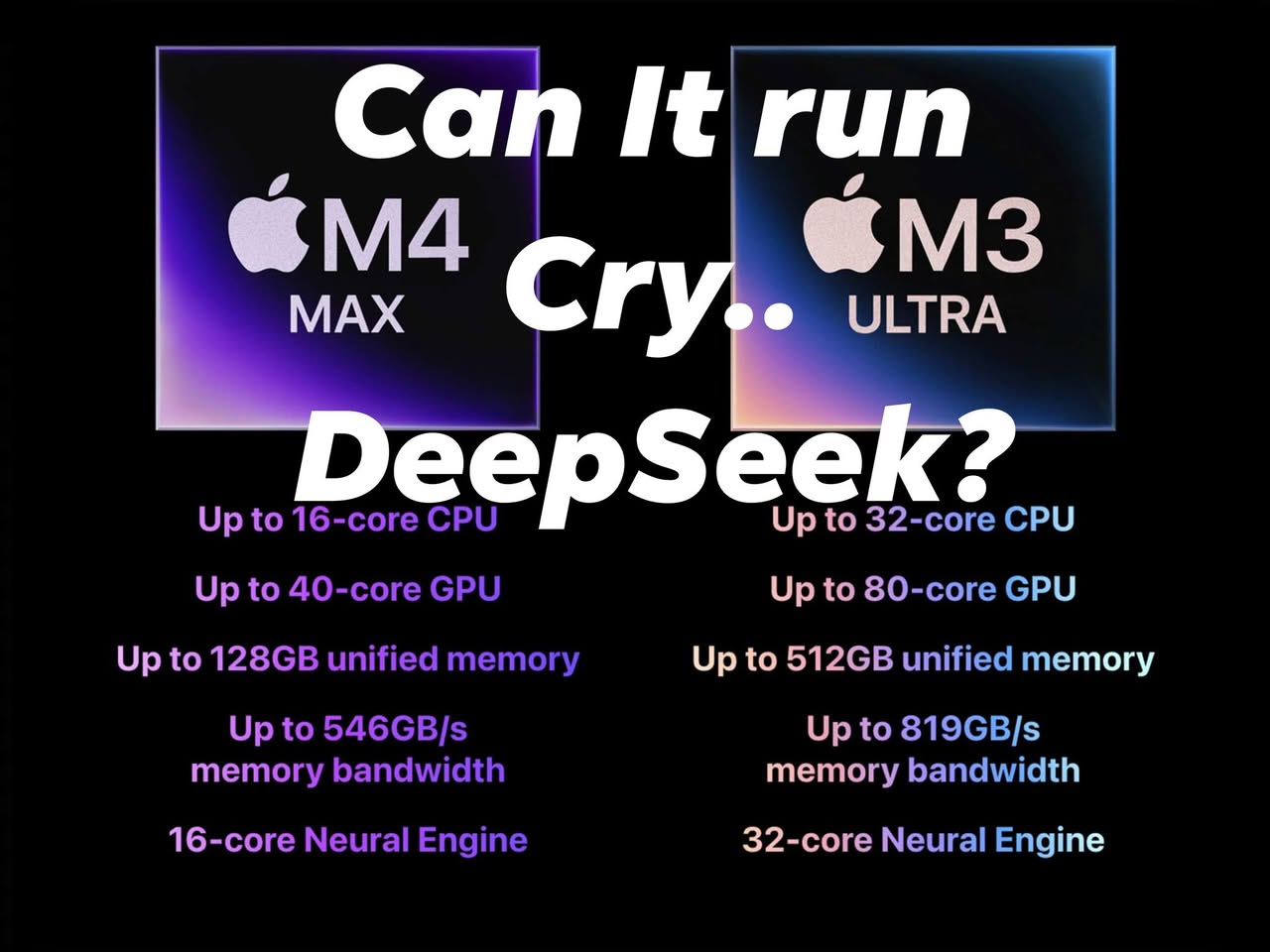

For people who want a simple answer: Yes, a single Mac Studio M3 Ultra machine can run the full DeepSeek-R1 671B model.

Specs:

- Model: DeepSeek-R1 671B at Q4 (should have very little quality loss).
- Loading the Q4 model requires around 325GB of RAM; Q8 needs around 650GB.
- During inference, DeepSeek's MoE structure only activates around 37B parameters per token, which is about 19GB of weights at Q4. The full model still has to stay in memory, and you need to keep some headroom for the KV cache.
- In practice, Q4 with the full 32k-token context uses around 450GB, well below the 512GB limit of the Mac Studio M3 Ultra.
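If you want to sanity-check the memory numbers yourself, here is a rough back-of-the-envelope sketch. It assumes exactly 4 or 8 bits per weight and decimal gigabytes; real quantized files carry extra scale data and runtime overhead, so treat the results as ballpark figures that land near the numbers above, not on them. The helper name `weight_gb` is made up for this example.

```python
def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight size in decimal GB (assumes a flat bit width)."""
    return params * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 671e9   # full DeepSeek-R1 parameter count
ACTIVE_PARAMS = 37e9   # parameters activated per token (MoE)

q4_total = weight_gb(TOTAL_PARAMS, 4)    # whole model at Q4
q8_total = weight_gb(TOTAL_PARAMS, 8)    # whole model at Q8
q4_active = weight_gb(ACTIVE_PARAMS, 4)  # weights touched per token at Q4

# Headroom left on a 512GB machine, using the ~450GB in-use figure from the post.
headroom_gb = 512 - 450

print(f"Q4 weights:        ~{q4_total:.1f} GB")
print(f"Q8 weights:        ~{q8_total:.1f} GB")
print(f"Q4 active weights: ~{q4_active:.1f} GB")
print(f"Headroom at 32k:   ~{headroom_gb} GB")
```

The takeaway: Q8 does not fit in 512GB at all, while Q4 fits with room left for the KV cache.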

Token generation is mostly limited by how fast the active weights can be read from memory. With a memory bandwidth of 819GB/s, we can expect around 20-25 tokens/s generation.
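Here is a minimal sketch of where a number like that comes from. It assumes decoding is purely memory-bandwidth-bound and that each generated token reads the roughly 18.5GB of active Q4 weights exactly once; KV cache reads and compute time are ignored, so this gives a ceiling, not a prediction. The function name `max_tokens_per_s` is made up for this example.

```python
def max_tokens_per_s(bandwidth_gb_s: float, active_weights_gb: float) -> float:
    """Upper bound on decode speed if every token reads the active weights once."""
    return bandwidth_gb_s / active_weights_gb


BANDWIDTH_GB_S = 819.0     # M3 Ultra memory bandwidth
ACTIVE_WEIGHTS_GB = 18.5   # 37B active parameters at 4 bits per weight

ceiling = max_tokens_per_s(BANDWIDTH_GB_S, ACTIVE_WEIGHTS_GB)

# How much of the theoretical ceiling the expected 20-25 tokens/s represents.
low_eff = 20 / ceiling
high_eff = 25 / ceiling

print(f"Theoretical ceiling: ~{ceiling:.0f} tokens/s")
print(f"20-25 tokens/s is ~{low_eff:.0%} to ~{high_eff:.0%} of that ceiling")
```

So 20-25 tokens/s means running at roughly half of the theoretical bandwidth ceiling, which is a plausible real-world figure once overhead is counted.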

So yes, this is the cheapest way to self-host the FULL DeepSeek-R1 671B model that you can get on the market.

How much? 37,336.50 MYR with an education discount.

This is much cheaper than a 4U GPU server with 8x A100 80GB cards behind a PCIe multiplexer. And the A100 cluster is actually slower, generating around 15 tokens/s compared with the Mac Studio's 20-25.

The Mac is now the cheapest LLM developer tool you can buy. And we haven’t even looked at optimization with the 32-core Neural Engine (Apple's NPU) yet.

Buy more Apple shares :D

I hope you learned something today. See you next time!