Jin Daily AI Trivia – China Dominates Open-Source MoE Models

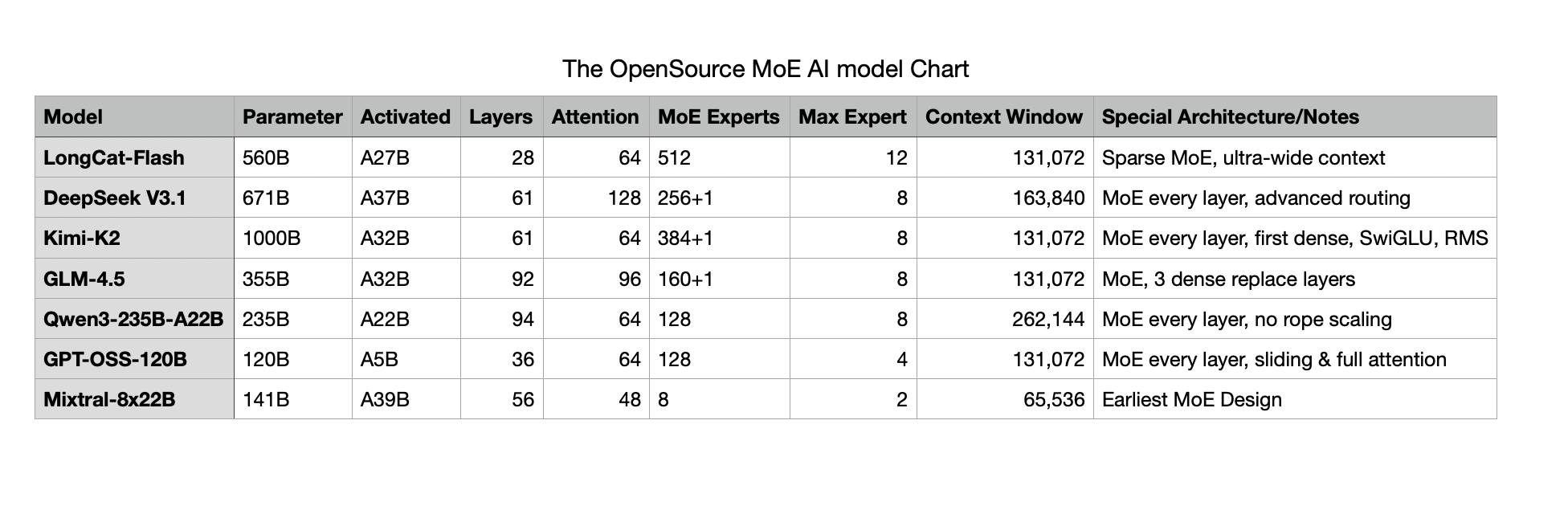

The latest state-of-the-art (SOTA) open-source Mixture of Experts (MoE) models are largely coming from Chinese companies.

Recently, Meituan (yes, the food delivery giant) released their MoE model called LongCat-Flash—and it once again surprised the AI research community. They managed to squeeze strong performance out of limited hardware, making it surprisingly usable with far fewer activated parameters.

The magic? Zero-Computation Experts.

Quick MoE refresher: instead of firing up all parameters for every token, the model only activates the “expert” networks relevant in that context.

LongCat goes a step further. It introduces what’s basically a “fake expert.” If the router decides a token already looks good enough, it won’t send it to a real expert. Instead, it sends it to the fake expert, which either skips, reuses, or drops in a shortcut output. This saves compute on easy tokens, so the budget goes to the hard ones.
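Here is a minimal sketch of the idea in plain Python, not LongCat-Flash's actual code. It assumes the fake expert is a plain identity function, and the names (`moe_layer`, `real_expert`, `identity_expert`), the router scores, and the toy "experts" are all made up for illustration.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def real_expert(scale):
    """Stand-in for an expert FFN: just an elementwise scale (hypothetical)."""
    def run(x):
        return [scale * v for v in x]
    return run

def identity_expert(x):
    """Zero-computation expert (assumed to be identity): returns the token as-is."""
    return x

def moe_layer(x, router_scores, experts, top_k=2):
    """Route one token to its top_k experts and mix outputs by router weight.

    Returns (output, number of real experts that actually ran).
    """
    probs = softmax(router_scores)
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    out = [0.0] * len(x)
    real_calls = 0
    for i in chosen:
        expert = experts[i]
        if expert is not identity_expert:
            real_calls += 1  # only real experts cost compute
        y = expert(x)
        w = probs[i] / norm
        out = [o + w * v for o, v in zip(out, y)]
    return out, real_calls

# Two real experts and two zero-computation experts (toy setup).
experts = [real_expert(2.0), real_expert(3.0), identity_expert, identity_expert]
token = [1.0, -1.0]

# "Hard" token: the router prefers the two real experts.
hard_out, hard_calls = moe_layer(token, [5.0, 5.0, 0.0, 0.0], experts)
# "Easy" token: the router prefers the two zero-computation experts.
easy_out, easy_calls = moe_layer(token, [0.0, 0.0, 5.0, 5.0], experts)

print(hard_calls, easy_calls)  # 2 0
```

The easy token comes back unchanged and triggers zero real expert calls, so the number of activated parameters varies from token to token.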

The other innovation—Shortcut-Connected MoE (ScMoE)—is about making multi-GPU training and inference faster. Normally, MoE wastes time waiting for tokens to be dispatched across GPUs, processed, and then merged. ScMoE overlaps routing and compute so experts can work locally in parallel and sync results in the next step. Less waiting, more throughput.

Bottom line: LongCat-Flash hits 100+ tokens per second while activating only a fraction of its total parameters. Another example of how Chinese teams are finding clever shortcuts to make giant models practical.

And don’t forget the Taikor, DeepSeek-V3.1. Its faster, cheaper hybrid thinking mode is basically the key essence of GPT-5: an AI model should know by itself when to switch to thinking mode and when not to, via its own control flag.

Now that the Western SOTA models are mostly closed-source, I wonder what will happen in the next 2-3 months :)

Hope you learned something new today!! See ya!!

If you have any questions about the chart, AMA (ask me anything) in the comments!!