Jin Daily AI Trivia: iPhone 17 Pro (A19 Pro) GPU with Neural Accelerator can run LLMs with ease 🚀

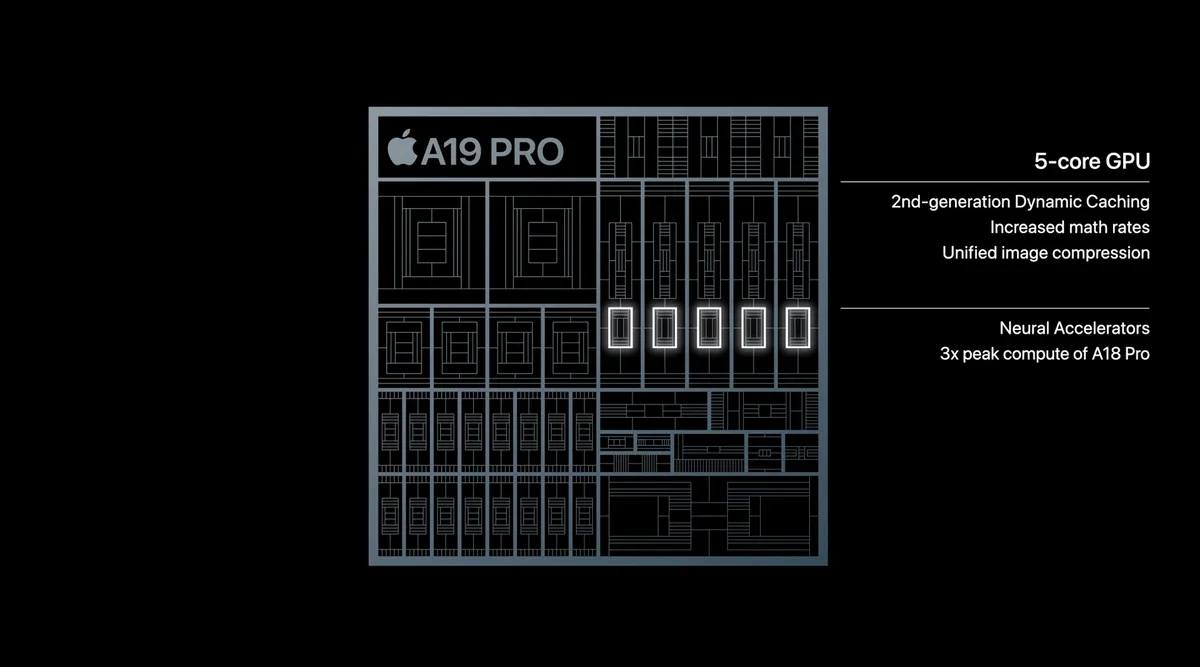

Apple’s new A19 Pro GPU comes with a Neural Accelerator — Apple’s take on Tensor Cores — and it seriously boosts AI inference speed.

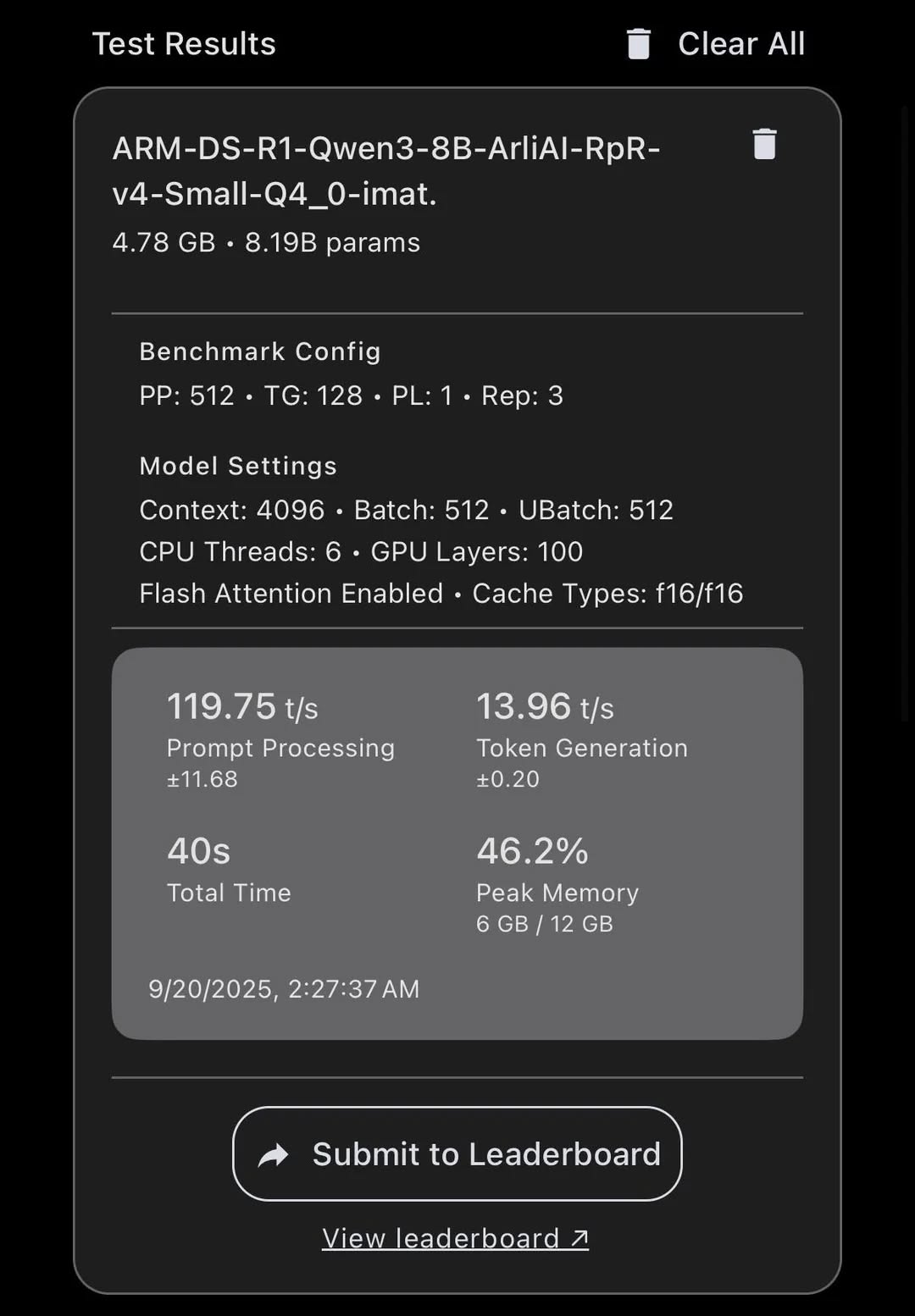

First tests are already out: running Qwen3-8B on the GPU (llama.cpp / MLX) hits 14–15 tok/s. Since decode speed scales roughly inversely with model size, that works out to about 50–60 tok/s for a 2B model, which is totally usable for on-device VLMs or text generation. Plus, every A19 Pro now comes with 12GB of RAM by default, with desktop-class memory bandwidth (80+ GB/s).
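Here's a rough back-of-the-envelope sketch of that scaling. It assumes token generation is memory-bandwidth-bound, so tok/s scales inversely with parameter count at the same quantization. The function names are made up for illustration, and the 4-bit (0.5 bytes per parameter) figure is an assumption, since the original tests don't state which quantization was used.

```python
def scale_tokens_per_second(measured_tps: float,
                            measured_params_b: float,
                            target_params_b: float) -> float:
    """Estimate tok/s for a different model size.

    Assumption: decoding is memory-bound, so throughput is inversely
    proportional to parameter count (same quantization, same device).
    """
    return measured_tps * measured_params_b / target_params_b


def bandwidth_bound_tps(bandwidth_gb_s: float,
                        params_b: float,
                        bytes_per_param: float) -> float:
    """Rough ceiling on tok/s: every generated token reads all weights once."""
    model_gb = params_b * bytes_per_param
    return bandwidth_gb_s / model_gb


# Measured range for Qwen3-8B on the A19 Pro, scaled down to a 2B model.
low = scale_tokens_per_second(14.0, 8.0, 2.0)
high = scale_tokens_per_second(15.0, 8.0, 2.0)
print(f"2B estimate: {low:.0f}-{high:.0f} tok/s")

# Ceiling for an 8B model at 80 GB/s, ASSUMING 4-bit weights (0.5 bytes/param).
ceiling = bandwidth_bound_tps(80.0, 8.0, 0.5)
print(f"8B bandwidth ceiling (assumed 4-bit): {ceiling:.0f} tok/s")
```

Under these assumptions the 2B estimate lands at 56–60 tok/s, in line with the 50–60 tok/s figure, and the measured 14–15 tok/s for the 8B model sits below the 20 tok/s bandwidth ceiling, as you'd expect once real-world overhead is included.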

Benchmark (Qwen3-8B Model)

M1: 11.4 tok/s

M4 Max: 79 tok/s

RTX 4090: 135 tok/s
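To put the phone's number next to these, here's a quick sketch that computes its speed relative to each chip. It uses 14.5 tok/s, the midpoint of the 14–15 tok/s range reported above, which is my own simplification and not a measured figure.

```python
# Qwen3-8B throughput from the benchmark list, in tok/s.
benchmarks = {"M1": 11.4, "M4 Max": 79.0, "RTX 4090": 135.0}

# Assumption: midpoint of the reported 14-15 tok/s range for the A19 Pro.
a19_pro_tps = 14.5

# Ratio > 1 means the A19 Pro is faster than that chip.
ratios = {name: a19_pro_tps / tps for name, tps in benchmarks.items()}

for name, ratio in ratios.items():
    print(f"A19 Pro vs {name}: {ratio:.2f}x")
```

That comes out to roughly 1.27x an M1, 0.18x an M4 Max, and 0.11x an RTX 4090.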

🔥 This means we’re literally holding M1-class AI performance in the palm of our hands (14–15 tok/s vs. 11.4 tok/s on the M1). How crazy is that?!