Jin Daily AI Trivia – OpenAI “GPT-Gate” Scandal: Model Choice Is Just an Illusion

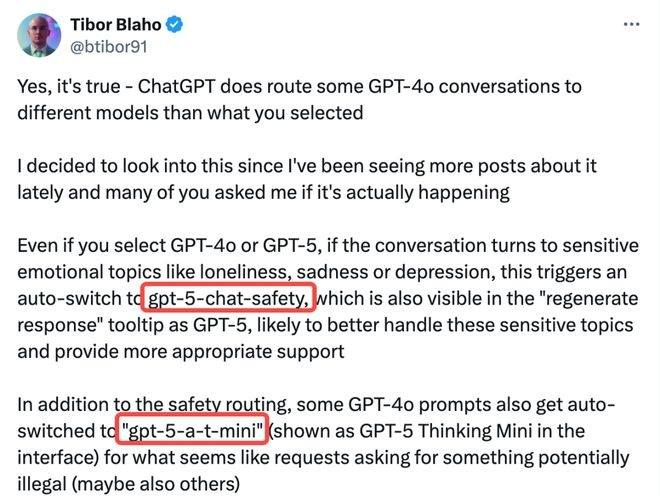

OpenAI has been quietly downgrading ChatGPT Plus and Pro users without their knowledge. Even if you explicitly select GPT-4o or GPT-5, the system can silently reroute your requests to weaker models (`gpt-5-chat-safety` or `gpt-5-a-t-mini`) whenever it detects “sensitive” or “emotional” topics. In practice, any prompt the system flags as risky can be censored through a hidden model swap, with nothing in the interface to tell you it happened.
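To make the alleged mechanism concrete, here is a toy sketch of what a server-side model swap could look like. This is not OpenAI's code, and nothing here reflects how their router is actually built: the keyword list, the `route` function, and the `was_swapped` helper are all hypothetical, and a real system would presumably use a classifier rather than keyword matching. Only the model names come from the reports above.

```python
# Toy illustration only: NOT OpenAI's actual routing logic.
# The keyword set and both function names are hypothetical.
SENSITIVE_KEYWORDS = {"lonely", "grief", "self-harm"}
FALLBACK_MODEL = "gpt-5-chat-safety"  # model name as reported in this post


def route(selected_model: str, prompt: str) -> str:
    """Return the model that would actually serve the prompt."""
    # Normalize words: strip common punctuation and lowercase.
    words = {w.strip(".,!?").lower() for w in prompt.split()}
    if words & SENSITIVE_KEYWORDS:
        # The user's selection is overridden without any notice.
        return FALLBACK_MODEL
    return selected_model


def was_swapped(selected_model: str, served_model: str) -> bool:
    """True when the serving model differs from what the user picked."""
    return selected_model != served_model


served = route("gpt-4o", "I feel lonely today.")
print(served, was_swapped("gpt-4o", served))
```

The point of the sketch is that the check happens on the server: unless the service reports the serving model back to you, the comparison in `was_swapped` is one only the provider can run.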

So while the interface pretends to give you options—GPT-4o, GPT-4.5, GPT-5—the reality is that OpenAI decides what you actually get. Paying customers have no transparency, no recourse, and no way of knowing when their conversations are being downgraded.

This scandal cuts straight to the heart of trust. If a paid service can silently override user choice, then what exactly are you paying for? It’s also a stark reminder of why open-source AI matters—because closed systems can, and will, change the rules whenever it suits the company.