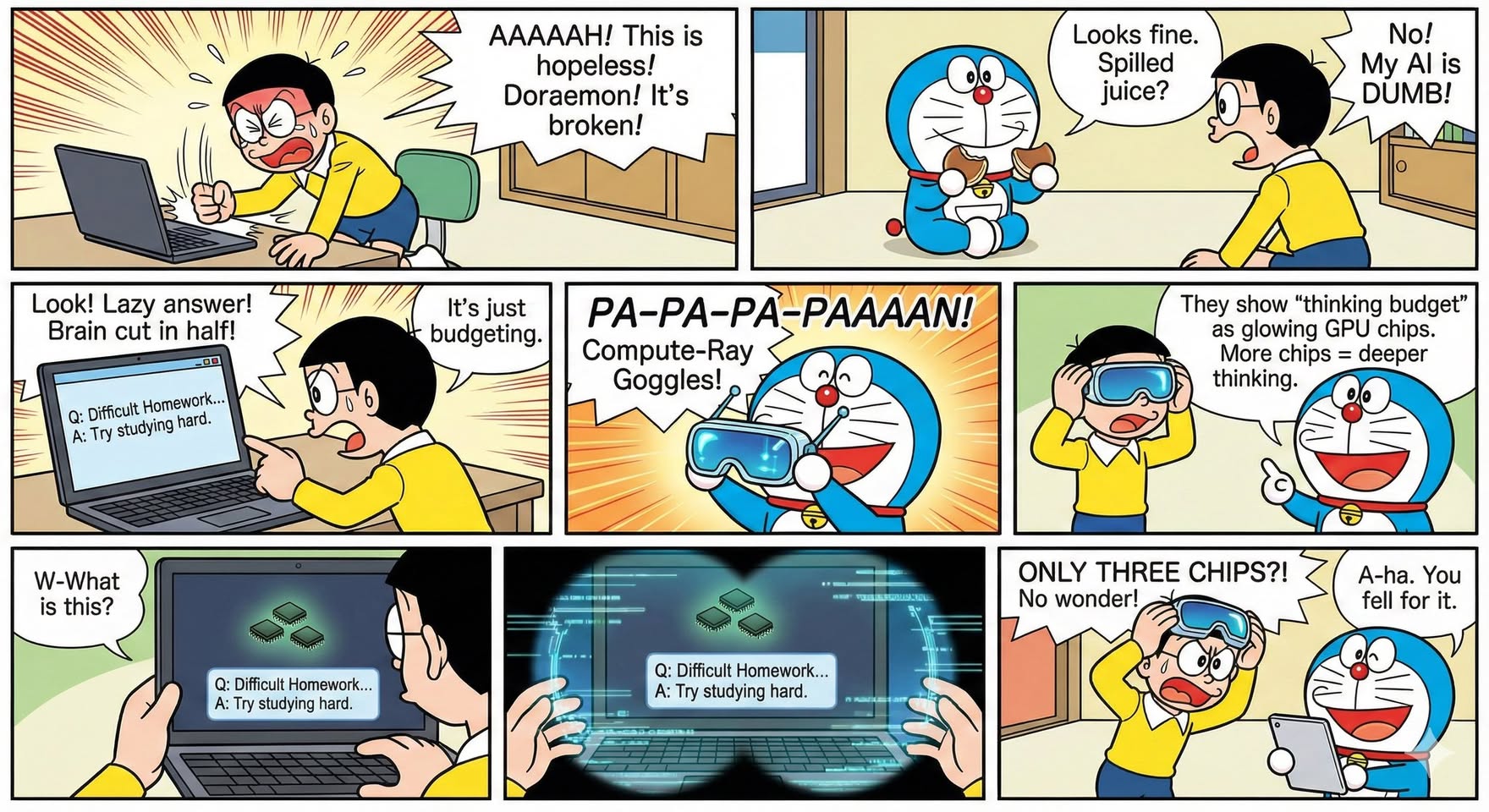

Jin Daily AI Trivia: Is GPT Auto Mode a Big Scam?

After the recent ChatGPT 5.2 update, I noticed something as a Free plan user: my answers felt noticeably worse. Classic enshittification vibes.

So I decided to dig deeper—and I found something interesting.

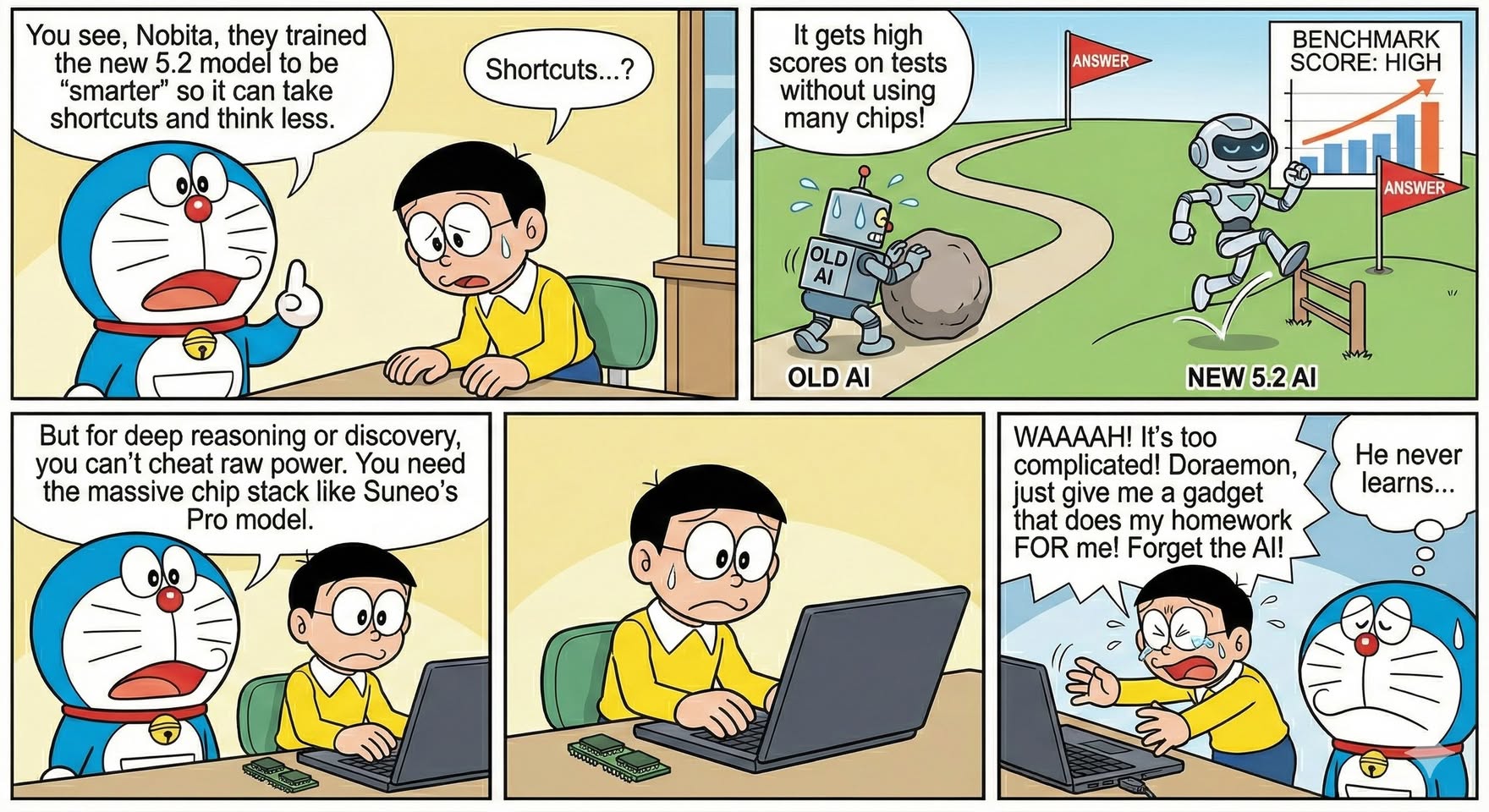

My suspicion turned out to be right: 4o is actually more expensive to run than GPT-5.x, and Auto mode is likely where the cost-cutting happens.

Here’s what I found:

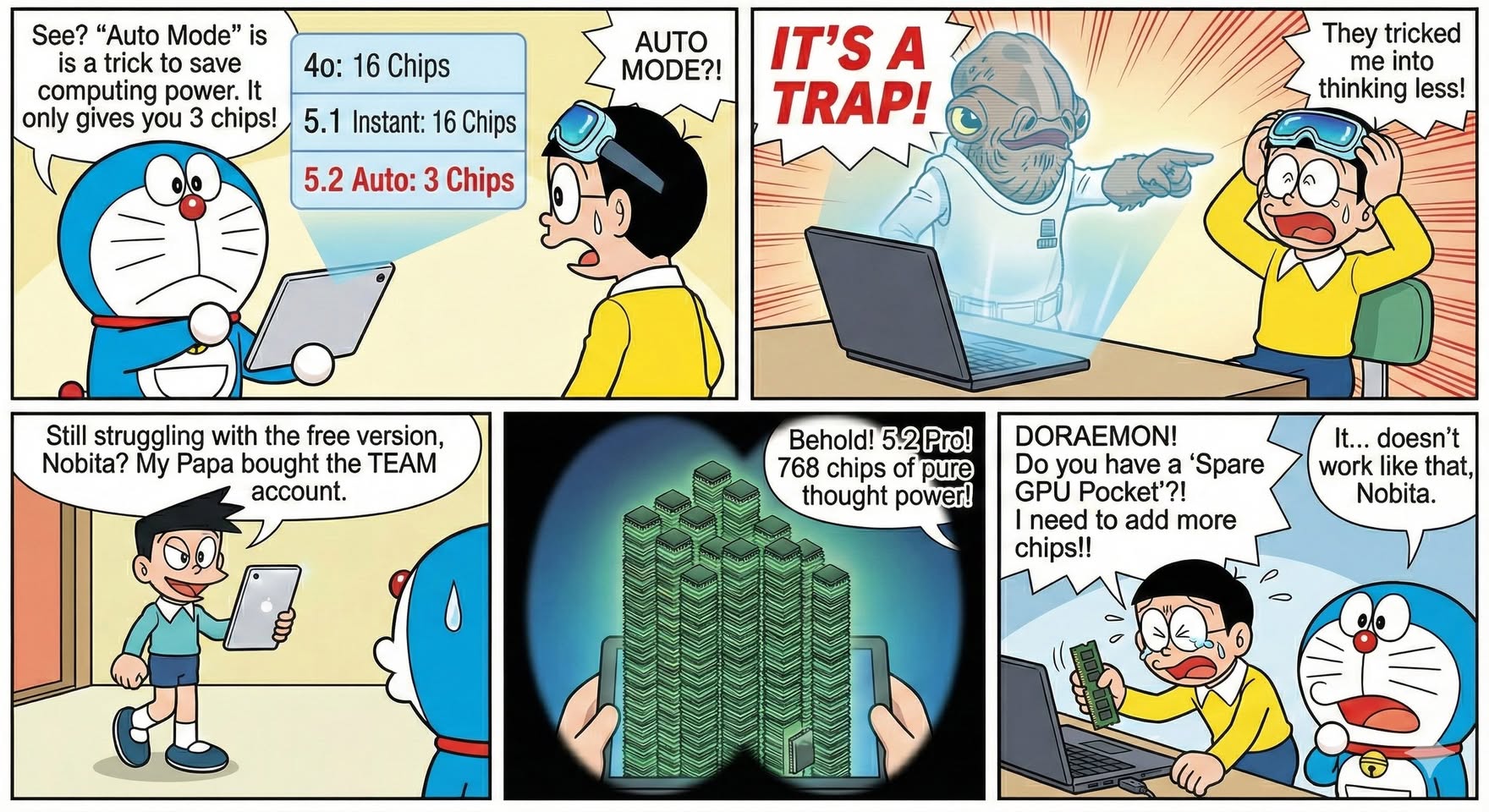

GPT-4o had a thinking budget of 16 layers

GPT-5.2 Auto mode? Only 3 layers

GPT-5.2 Instant (manual selection) jumps to 8 layers

For Free users, the ceiling appears to be 8 layers; you're not getting anything more than that. But Auto with 3 layers? That's a real BS move.

That’s when it clicked: you should never blindly trust or USE Auto mode, especially with the 5.2 model.

Observed Thinking Layers by Model (As a Paid user)

4o: 16 layers

5.2 Auto: 3 layers

5.2 Instant: 8 layers

5.2 Thinking: 64 layers

5.2 Pro: 768 layers

5.1 Instant: 16 layers

5.1 Thinking: 96 layers

5.1 Pro: 512 layers

CoT layers = how many reasoning layers the AI uses before producing an answer

More layers = more thinking time + more compute cost
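To put those numbers side by side, here is a quick Python sketch. It only uses the layer counts listed above (my own observations, not official figures), and the names `OBSERVED_LAYERS` and `relative_budget` are made up for illustration. It also assumes cost scales roughly in proportion to layer count, which is a rule of thumb here, not something I measured.

```python
# Layer counts as reported in this post (observations, not official figures).
OBSERVED_LAYERS = {
    "4o": 16,
    "5.2 Auto": 3,
    "5.2 Instant": 8,
    "5.2 Thinking": 64,
    "5.2 Pro": 768,
    "5.1 Instant": 16,
    "5.1 Thinking": 96,
    "5.1 Pro": 512,
}


def relative_budget(model: str, baseline: str = "4o") -> float:
    """Ratio of a model's reported layer count to the baseline's.

    Assumption: compute cost is roughly proportional to layer count.
    """
    return OBSERVED_LAYERS[model] / OBSERVED_LAYERS[baseline]


if __name__ == "__main__":
    # Print every model from smallest to largest reported budget.
    for name in sorted(OBSERVED_LAYERS, key=OBSERVED_LAYERS.get):
        layers = OBSERVED_LAYERS[name]
        print(f"{name:<13}{layers:>4} layers  {relative_budget(name):.2f}x of 4o")
```

By this rough measure, 5.2 Auto sits at 3/16 of the 4o budget, and at 3/8 of what you get by picking 5.2 Instant manually.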

Auto mode isn’t “smart optimization”—it’s aggressive cost control. If you care about answer quality, manual model selection matters more than ever.

🤔 Hope you learned something new today.