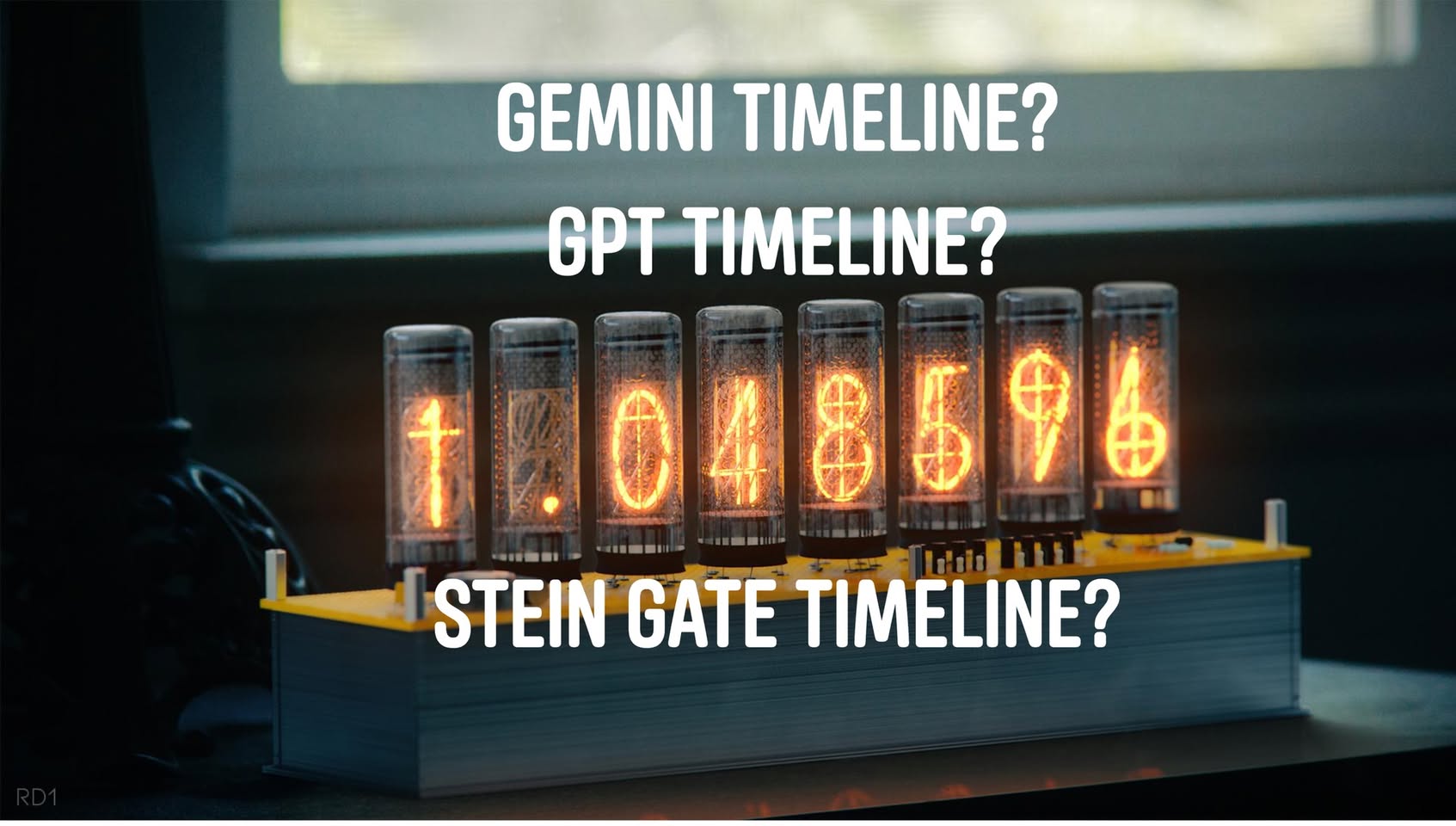

Jin Daily AI Trivia – The AI Divergence Meter: Two Paths, One Future?

While I was getting free juice for my car and myself at a Tesla service center, Google and OpenAI quietly made a decision that will ripple through AI for the next decade.

Not a feature choice. A philosophical choice about what AI should be.

Two diverging paths. Two incompatible visions. And once you pick one of them as your teacher model or wrapper base, you’re largely locked in.

Path 1: Gemini 3.0 – The Autonomous Oracle

Google went bold. Very bold.

Gemini 3.0 is effectively trained as a “know-it-all guru.” Backed by Google’s vast data advantage and advanced reasoning frameworks (especially in Deep Think mode), the model is trained to decide autonomously what it believes is the best approach to a given problem. Your prompt becomes just one small input among many in its internal decision process.

The flavor: Ultra-detailed, multi-perspective, multimodal responses. The model can understand video, images, and audio, can even generate them, and often presents what it believes is the optimal solution. Sometimes you don’t really get to choose: Gemini commits to its own conclusion.

The cost: This autonomy is hard to rein in. You can’t always constrain it to simpler outputs. You can’t reliably force it to follow a strict template. It has opinions. It has reasoning trajectories. It wants to be helpful on its own terms.

It’s the difference between hiring a brilliant consultant and hiring an employee. Consultants tell you what they think is right. Employees execute your plan.

Path 2: GPT-5.2 – The Obedient Executor

OpenAI went the opposite direction.

GPT-5.2 is optimized to follow instructions to the letter. Its post-training guardrails are built around a new safe-completions framework, prioritizing strict instruction adherence while maintaining safety and usefulness. The model evaluates your request, respects your constraints, and executes exactly what you asked for: nothing more, nothing less.

The flavor: Structured, predictable, low-surprise output. The model behaves like a military dog: obedient, reliable, and locked onto the command. It won’t wander off to suggest alternatives. It won’t second-guess your plan. It optimizes precisely for your stated objective.

The cost: That rigidity comes with trade-offs. Early users have noted that the emotional warmth and subtle nuance that made earlier ChatGPT versions feel more human now often require extra prompting or tuning. OpenAI’s new guardrail system is still maturing, and some rough edges remain. (Many old tricks can still slip past the new guardrails; GPT-5.2 really feels rushed out the door.)

But here’s the key point: this rigidity is intentional. Controllability is the feature.

Why This Matters: The Alignment Inflection

This isn’t just a tuning difference. It’s a fundamental fork in AI alignment philosophy.

Gemini asks: “What if we trained AI to be genuinely intelligent and autonomous, and trusted it to reason correctly?”

GPT-5.2 asks: “What if we trained AI to be a precise instrument that executes human intent without deviation?”

One approach trusts the intelligence. The other trusts human guidance.

Here’s the part that matters most: whichever path dominates will shape everything downstream.

Both companies are now using their flagship models as teacher models:

Google is fine-tuning Gemini 3.0 Flash using Gemini 3.0 Pro’s reasoning style

OpenAI plans to fine-tune GPT-5.2 Mini using GPT-5.2 Pro’s instruction-following behavior

This cascades into millions of applications. Every GPT-based wrapper will inherit executor DNA. Every Gemini-based wrapper will inherit oracle DNA.

No amount of prompt engineering will fully fix this alignment. You can’t prompt an autonomous reasoner into becoming a pure executor. You can’t prompt warmth and autonomy into a rigid instruction follower.

Teacher-model DNA is sticky.
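Why would the student inherit the teacher’s personality so faithfully? Here is a minimal sketch, assuming the textbook knowledge-distillation setup: a student trained to match the teacher’s temperature-softened output distribution via KL divergence. This is not Google’s or OpenAI’s actual pipeline, which isn’t described here; the three “response styles” and all logit values are hypothetical, chosen only for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities, softened by a temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    The loss is zero only when the student reproduces the teacher's
    preferences, so any deviation from the teacher's style is penalized.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical scores over three response styles:
# [follow the template, suggest alternatives, rewrite the plan]
teacher = [3.0, 0.5, 0.1]            # an "executor"-leaning teacher
copycat_student = [3.0, 0.5, 0.1]    # already matches the teacher
drifting_student = [0.1, 3.0, 0.5]   # prefers suggesting its own alternatives

print(distillation_loss(teacher, copycat_student))   # 0.0
print(distillation_loss(teacher, drifting_student))  # > 0
```

In this pure-distillation setup the student’s only training signal is “be more like the teacher,” so whatever the teacher prefers, autonomy or obedience, becomes the target. Practical recipes usually also scale the loss by T² and mix in a hard-label term; both are omitted here for brevity.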

Now imagine millions of low-cost, Gemini-aligned and GPT-aligned models deployed worldwide, each carrying forward a distinct philosophy of human control. Some say, “Trust my reasoning.” Others say, “Tell me exactly what to do.”

This is no longer a two-horse race. It’s a fork in the road, and we’re building the global AI stack on top of one path or the other.

The real question isn’t which model is better.

It’s this: Which vision of AI do we want to live with?

And unfortunately, that decision has largely already been made, by engineers in Mountain View and San Francisco rather than by any global alignment consensus.

Those who control the Tiberium control the future.

Data was the Tiberium.

PS: DeepSeek demonstrated that you don’t need Google- or OpenAI-level compute to achieve strong reasoning. Chain-of-thought is now cheaper, faster, and more accessible than ever. But alignment didn’t get solved; it just got scaled. And remember, they also use Google/OpenAI models as teacher models.

El.Psy.Kongroo