Jin’s Daily AI Trivia: Apple + EXO Fix the Mac AI Cluster Problem

I’ve always said the best local inference machine you can buy is an Apple Silicon Mac—especially if you’re planning to run a small to medium model on-prem. It makes a lot more sense than an overpriced DGX Spark / GB10 unit that overheats and delivers very low tokens per second.

That said, once a model is too large to fit on a single Mac, clustering becomes the next option—but until recently, Mac AI clusters had a few major issues.

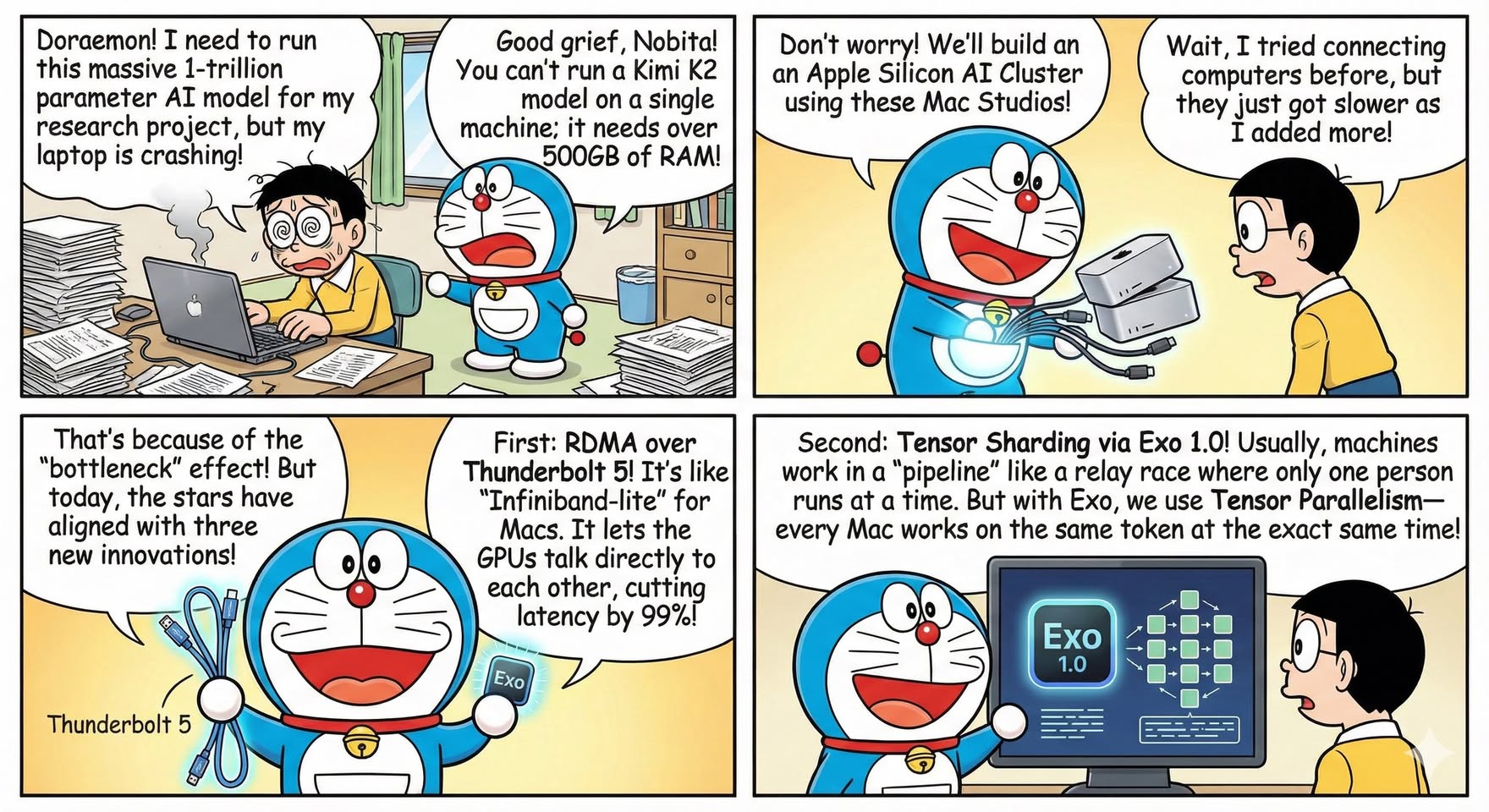

Previously, the biggest bottleneck was the networking stack (10Gbps or 40Gbps TCP/IP). The more nodes you added, the slower token generation could get, because every network packet had to pass through the full CPU → memory → GPU path on each machine. That overhead adds up fast.

Now Apple has dropped three bombshells that (mostly) fix this.

- RDMA over Thunderbolt 5

- Basically, direct GPU memory access—think NVLink-like behavior over TB5. Much less CPU overhead.

- Tensor sharding with EXO 1.0

- Instead of the old pipeline approach (where tokens get processed sequentially across nodes), Tensor sharding lets the workload be distributed more evenly so nodes can work in parallel more effectively.

- MLX Distributed

- An Apple Silicon–optimized framework that treats the whole cluster like a single logical accelerator.

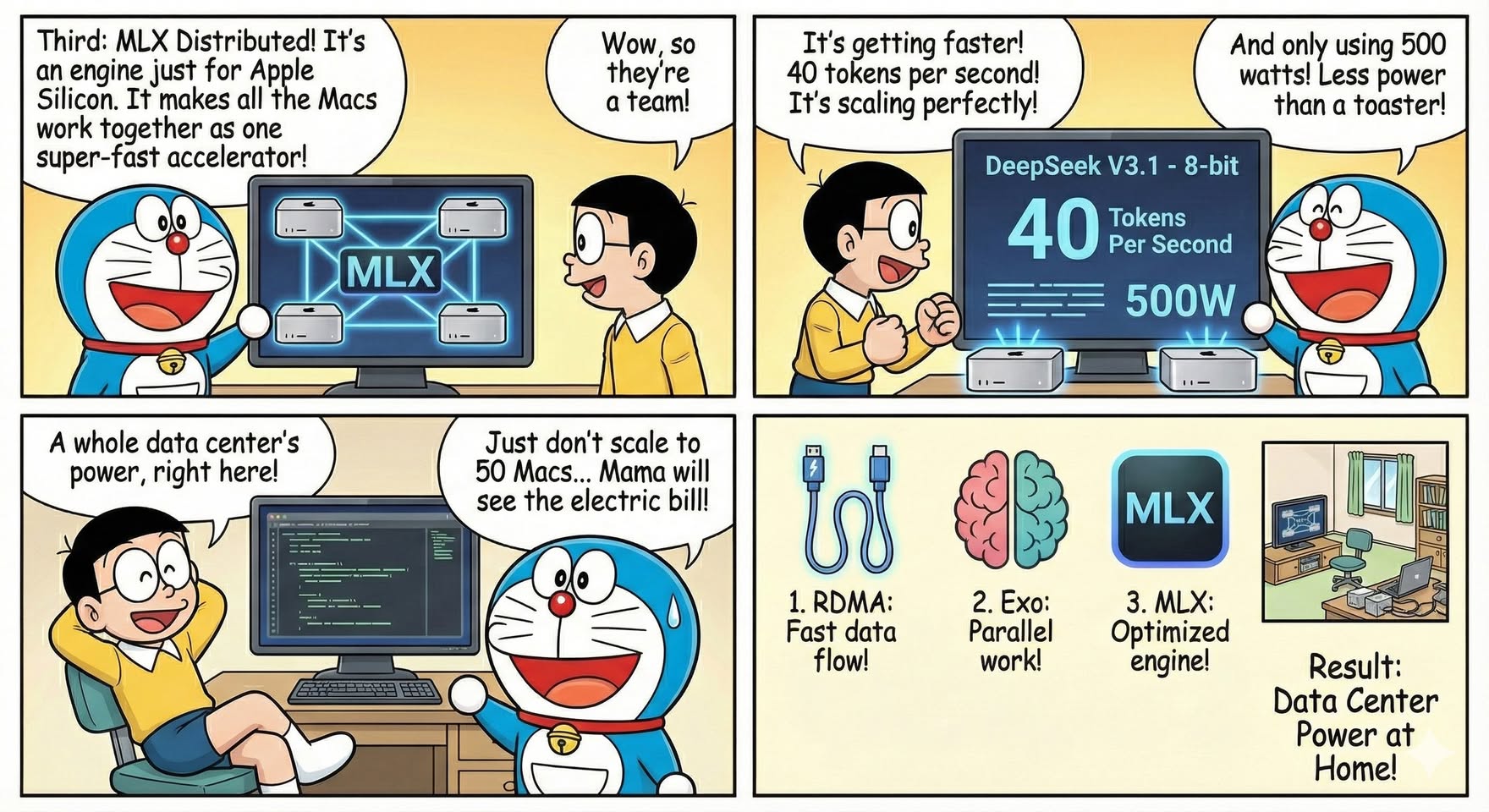

Summary

You can spend RM15,000 to buy two Mac mini M4 Pro (64GB each)—128GB total unified memory, with 273GB/s memory bandwidth per unit—and get 3–4× better performance than a NVIDIA DGX Spark / GB10 that costs RM18,000, running the same GPT-OSS-120B. (remember GB10 real life memory bandwidth is less than 200GB/s)

Or you can spend RM22,500 to buy three Mac mini M4 Pro for 192GB total memory, which is more than an NVIDIA H200 (141GB)—and that single GPU server can cost around RM170,000. Power draw is also dramatically different: under ~250W for the Mac mini cluster versus ~2kW for a single high-end GPU server.

And once the M5 Mac minis are out, prices will likely drop even further.

Let me know if you want me to explain the main limitations of Apple Silicon for AI 😄