Daily Jin AI Trivia: 🚀 YTL AI Lab launches ILMU AI Model fine-tuned for Malay language

YTL AI Lab just announced their latest ILMU multimodal AI model. It can generate images with Malaysian context and even produce audio responses in Malay.

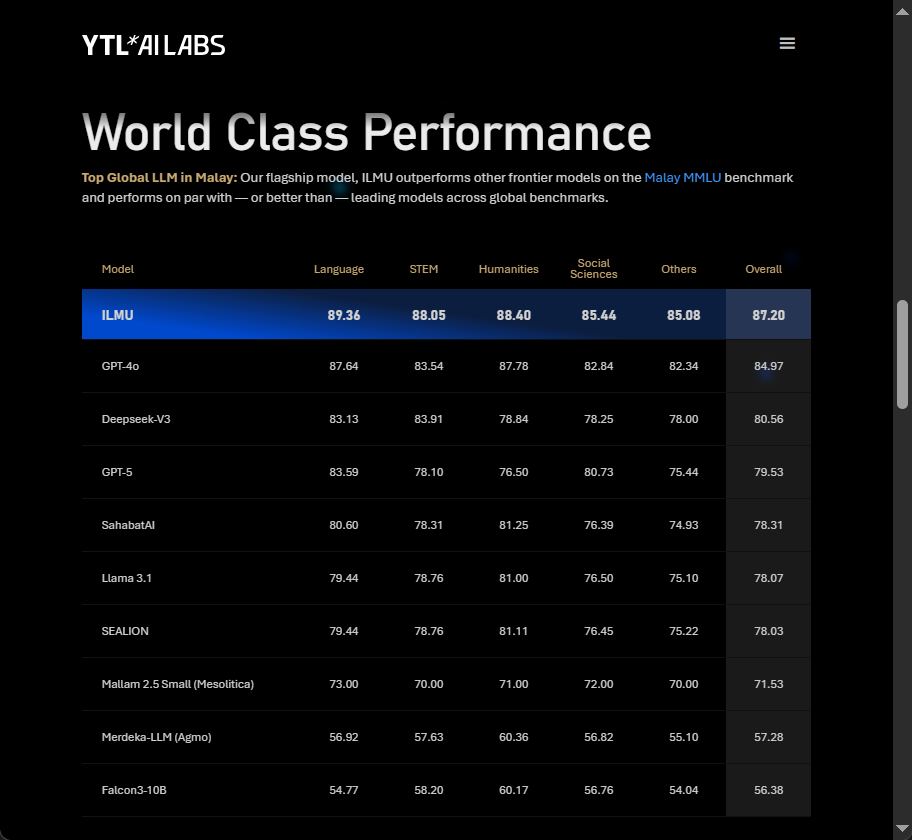

The best part? They’ve released their MMLU benchmark results, along with a specialized MalayMMLU (forked from IndoMMLU).

From the scores, YTL claims it outperforms all regional models — and even some SOTA models — for Malay language tasks. Congratulations to the YTL AI Lab team! 🎉

So, what can we learn here? (My hot take without knowing their actual design)

From a quick look, the ILMU model seems to be based on Qwen2.5-72B. Why do I think so? Their MalayMMLU GitHub repo contains benchmarks for Qwen2.5-72B, and its CMMLU and overall MMLU scores are very close to ILMU’s. Interestingly, they didn’t list Qwen as a comparison model on their official website or system card, even though the GitHub data shows Qwen ranking among the top performers.

How did they make a text-only model handle image generation and audio output? My guess: they likely used a ViT image encoder plus a small projector (linear or MLP). My bold guess would be EVA-CLIP ViT-G/14 + LayerNorm (LN) + a linear projector to add Qwen2.5-VL-style vision functionality, and Mesolitica Malaysian-Qwen2.5-7B-Audio-Instruct purely as the audio encoder (feature extractor), with a linear projector into the LLM hidden size (D_llm) for audio output.
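To make the "encoder + LN + linear projector" idea concrete, here is a tiny pure-Python sketch of what such a projector does: it normalizes each encoder token and maps it from the encoder width to the LLM hidden size so the LLM can read it like a token embedding. This is only an illustration of my guess, not YTL's actual design. The names `make_projector`, `D_ENC`, and `D_LLM` are hypothetical, the dimensions are toy values, and the weights are random instead of trained.

```python
import math
import random


def layer_norm(x, eps=1e-5):
    """Normalize one token vector to zero mean and unit variance (no learned scale/shift)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def linear(x, weight, bias):
    """Plain matrix-vector product: weight has shape (d_out, d_in)."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def make_projector(d_enc, d_llm, seed=0):
    """Build an LN + Linear projector with random (untrained) weights. Hypothetical helper."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(d_enc)
    weight = [[rng.uniform(-scale, scale) for _ in range(d_enc)] for _ in range(d_llm)]
    bias = [0.0] * d_llm

    def project(tokens):
        # Each encoder token becomes one "soft token" in the LLM embedding space.
        return [linear(layer_norm(t), weight, bias) for t in tokens]

    return project


# Toy sizes for illustration only; real encoders and LLMs are far wider.
D_ENC, D_LLM = 8, 16
project = make_projector(D_ENC, D_LLM)

# Stand-in for encoder output: 4 patch (or audio frame) tokens of width D_ENC.
rng = random.Random(1)
patch_tokens = [[rng.gauss(0, 1) for _ in range(D_ENC)] for _ in range(4)]

soft_tokens = project(patch_tokens)
print(len(soft_tokens), len(soft_tokens[0]))  # prints "4 16"
```

In a real setup the projected tokens would be concatenated with the text token embeddings before going into the LLM, and the projector weights would be learned during training while the encoder is often kept frozen.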

After that, it’s the tedious SFT and DPO work.

Still, pulling all of this together is a huge effort. Kudos to the YTL AI team and their infrastructure muscle. 💪

I hope you learned something new today! See ya!