Jin Daily AI Trivia: Jetson AGX Thor is not the AI machine for you

As with most Nvidia keynotes and non-GPU product launches (seriously, where’s the DGX Spark?), the recent Jetson AGX Thor announcement isn’t something you should get too excited about, especially when you see the price tag.

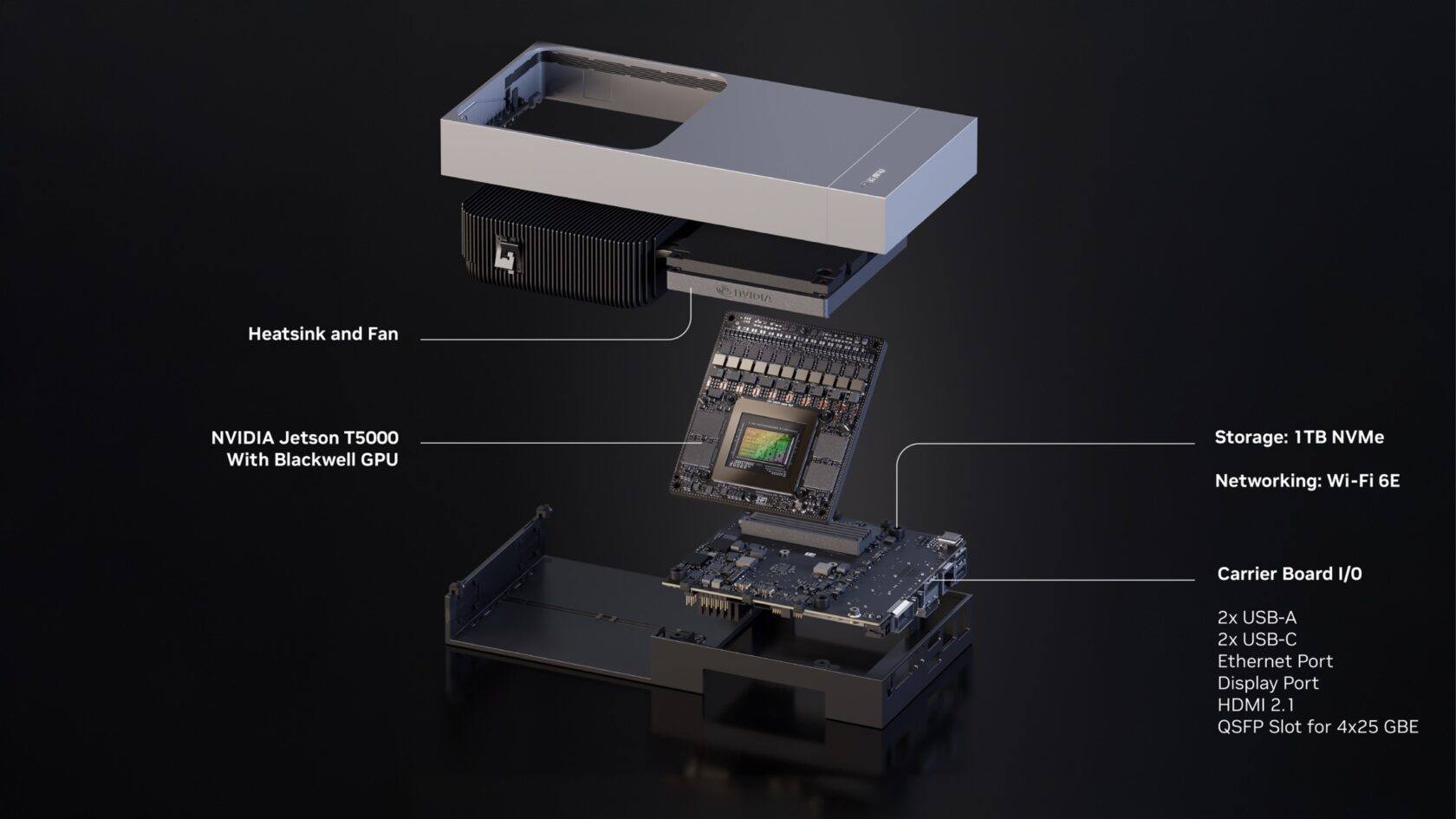

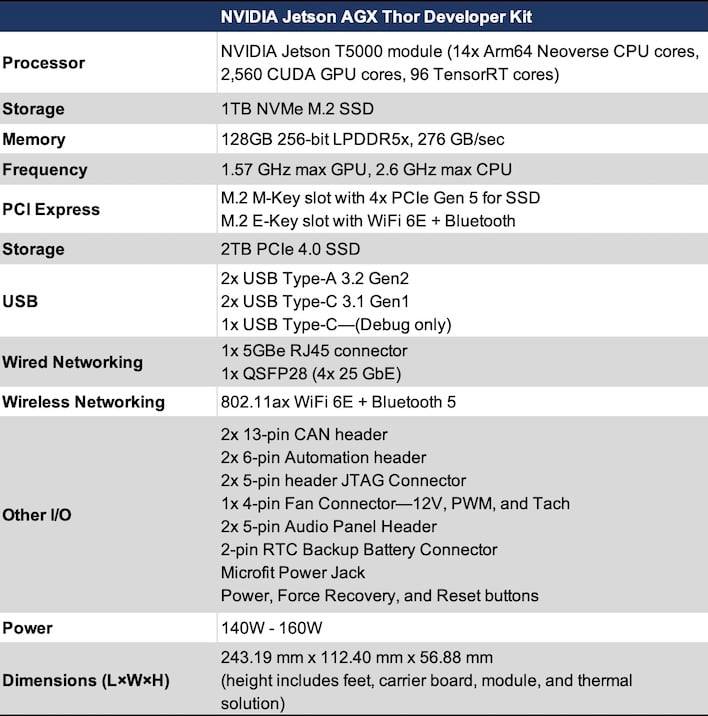

Local Nvidia developer kit distributor Cytron is listing it as coming soon for MYR 17,495. The device packs a Blackwell GPU (rated higher than an RTX 4080 in peak AI TOPS, at least on paper) and a 14-core Arm CPU. Sounds impressive, right?

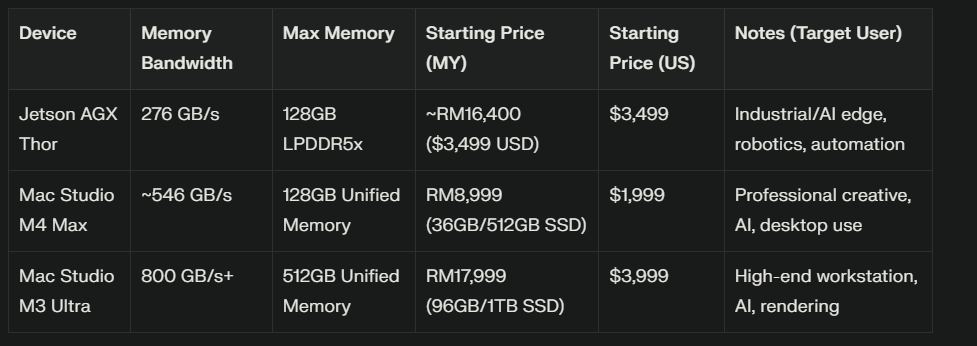

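But here’s the catch: you shouldn’t buy this if your plan is LLM training or inference. The reason is simple: its memory bandwidth is low relative to its compute. Generating each token means reading the model’s weights from memory, so bandwidth, not TOPS, caps the speed. That means you’ll get less than 20 tokens per second on 30B models. For the same price, a Mac with an Apple M4 Max or M3 Ultra gives you far more memory bandwidth and CPU power, and therefore much better LLM performance.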
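To see where a number like "less than 20 tokens per second" comes from, here is a rough back-of-envelope sketch. It assumes a dense 30B model whose weights are all read once per generated token, and it ignores KV cache traffic and other overhead, so real speeds will be lower. The bandwidth figures are assumptions taken from vendor spec sheets (not from the listing above), and `max_decode_tokens_per_sec` is just an illustrative helper name.

```python
def max_decode_tokens_per_sec(bandwidth_gb_s, params_billion, bytes_per_param):
    """Rough upper bound on decode speed for a dense model.

    Assumes every generated token requires reading all weights once,
    so speed is capped at bandwidth / model size.
    """
    weight_gb = params_billion * bytes_per_param  # billions of params * bytes each = GB
    return bandwidth_gb_s / weight_gb


# Assumed peak memory bandwidth in GB/s (vendor spec-sheet values; verify before relying on them).
DEVICES = {
    "Jetson AGX Thor": 273,
    "Apple M4 Max (top configuration)": 546,
    "Apple M3 Ultra": 819,
}

for name, bandwidth in DEVICES.items():
    for label, bytes_per_param in [("4-bit", 0.5), ("8-bit", 1.0)]:
        rate = max_decode_tokens_per_sec(bandwidth, 30, bytes_per_param)
        print(f"{name}: 30B at {label} -> at most ~{rate:.1f} tokens/s")
```

Under these assumptions, a 4-bit 30B model on the Thor tops out at roughly 18 tokens per second, while the same model on the Apple chips has two to three times the headroom.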

So what is the Jetson AGX Thor good for? It’s specifically designed for autonomous driving and robotics. Computer vision workloads benefit from its fast tensor cores, but they don’t need the massive memory bandwidth that LLMs demand.

This is where VLA (Vision-Language-Action) comes in. Think of VLA as the next step after LLMs and vision models—it combines seeing (vision), understanding (language), and doing (action). Perfect for robots: they see their environment, interpret it with AI, and then act—whether that’s navigating a street, picking up an object, or coordinating movement. The Jetson AGX Thor is well-suited for this kind of workload because it’s optimized for computer vision + real-time action, not for cranking out tokens in a chatbot.
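The see, understand, act idea can be sketched as a toy loop. This is only an illustration: real VLA models are typically a single neural network that maps camera images and an instruction to robot actions, whereas the three stub functions below (`perceive`, `interpret`, `act`) are hypothetical stand-ins using plain Python data.

```python
from dataclasses import dataclass


@dataclass
class Action:
    verb: str
    target: str


def perceive(frame):
    # Vision: a real system would run a vision encoder on camera pixels.
    # Here the "frame" already lists the visible objects.
    return frame["objects"]


def interpret(objects, instruction):
    # Language: pick the first visible object that the instruction mentions.
    for obj in objects:
        if obj in instruction:
            return Action("pick_up", obj)
    return Action("wait", "none")


def act(action):
    # Action: a real robot would send motor commands; here we return a string.
    return f"{action.verb}:{action.target}"


def vla_step(frame, instruction):
    # One pass of the loop: see, understand, then do.
    return act(interpret(perceive(frame), instruction))


print(vla_step({"objects": ["cup", "ball"]}, "pick up the ball"))
```

On a robot this step runs continuously on fresh camera frames, which is why fast vision compute and low latency matter more than token throughput.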

Bottom line: Jetson AGX Thor is a robot brain, not a local AI workstation. If your goal is robotics or autonomous systems, it’s a great fit. But if you just want to run LLMs or train AI, you’ll get way more bang for your buck with Apple Silicon.

Hope you learned something new today! See ya!